Robots.txt Optimization

Overview

In SEO, a well-optimized robots.txt file has considerable influence over how search engines interact with your website's content, which makes it a core part of any strategy aimed at better visibility and crawl efficiency. The robots.txt file is a plain-text document at the root of your domain (for example, https://example.com/robots.txt). Well-behaved search engine bots read it before crawling to learn which URLs they may fetch and which areas of the site they should stay out of. Robots.txt controls crawling, not indexing: a disallowed URL can still appear in search results if other pages link to it, so pages that must stay out of the index need a noindex directive on a crawlable page, or authentication. Used well, the file reduces crawl budget waste, where search engines spend resources on low-value URLs such as login pages, internal search results, or duplicate content instead of the pages you want to rank.
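To make the crawl-or-skip decision concrete, here is a minimal Python sketch that uses the standard library's `urllib.robotparser` to check which URLs a bot may fetch. The robots.txt content, the example.com URLs, and the `crawlable()` helper are hypothetical. Note that this parser applies the first matching rule in file order and treats `*` and `$` inside paths literally, so it only approximates how Google evaluates a file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep all bots out of login and internal search
# pages so crawl budget is spent on content pages.
ROBOTS_TXT = """\
User-agent: *
Disallow: /login/
Disallow: /search
"""


def crawlable(robots_txt: str, urls: list, agent: str = "Googlebot") -> dict:
    """Map each URL to True if `agent` may crawl it under `robots_txt`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return {url: parser.can_fetch(agent, url) for url in urls}


if __name__ == "__main__":
    urls = [
        "https://example.com/blog/robots-guide",
        "https://example.com/login/",
        "https://example.com/search?q=shoes",
    ]
    for url, allowed in crawlable(ROBOTS_TXT, urls).items():
        print("crawl" if allowed else "skip ", url)
```

Running it reports the blog post as crawlable and the login and search URLs as skipped. Because the check covers crawling only, a skipped URL could still be indexed from external links.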

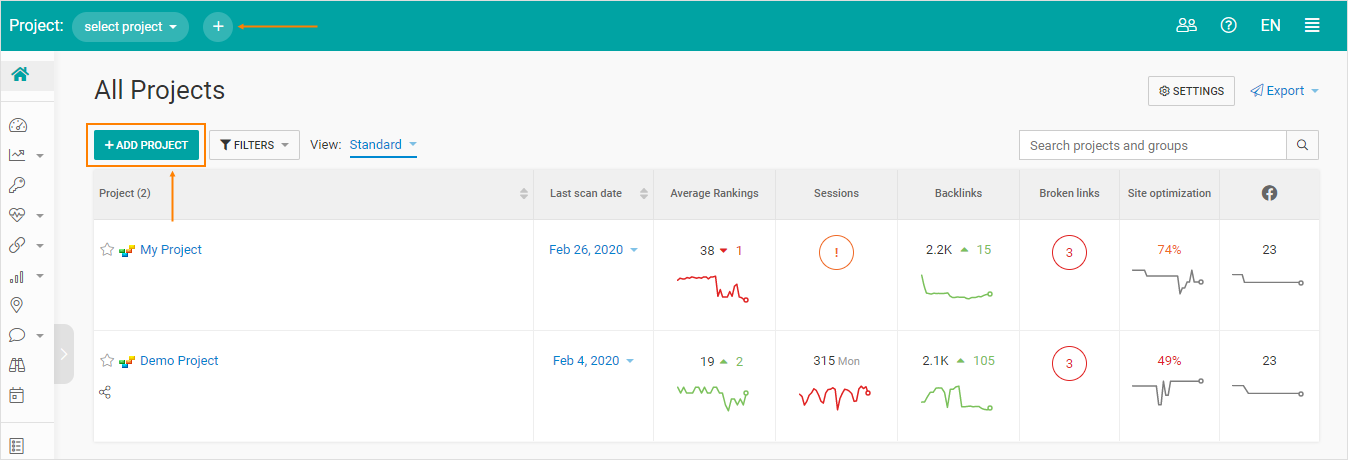

Optimizing this file starts with an analysis of your website's architecture, for example with the CGM SEO Tool, to identify the high-priority pages and sections crawlers should reach and the areas that should be excluded. This audit belongs to a broader content-architecture review aligned with your digital marketing goals and audience intent. Correct syntax matters just as much: each group begins with a User-agent line, such as "User-agent: *" to address all bots, followed by rules such as "Disallow: /private-directory/" or "Allow: /public-directory/". A single typo or misplaced slash can block critical content from being crawled. Be deliberate about overlapping rules, too. Allowing specific files inside a disallowed directory is a legitimate pattern: Google and the Robots Exclusion Protocol standard (RFC 9309) apply the most specific (longest) matching rule, and when an Allow and a Disallow rule are equally specific, the Allow rule wins. Not every crawler or parser follows that precedence, however, so keep overlaps to a minimum and test them. Review the file after any significant change to site structure, content strategy, or seasonal campaigns as part of routine SEO maintenance.
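Because precedence is where overlapping rules go wrong, the following Python sketch implements the matching rules described in RFC 9309 for a single user-agent group: `*` matches any sequence of characters, a trailing `$` anchors the end of the URL path, the longest matching rule wins, and Allow wins a tie. It is a simplified illustration (it skips percent-encoding normalization and user-agent group selection), the paths are hypothetical, and `is_allowed()` is not part of any library. Python's own `urllib.robotparser`, by contrast, uses the first matching rule in file order, which shows how two tools can read the same file differently.

```python
import re


def rule_to_regex(path: str) -> "re.Pattern":
    """Compile a robots.txt path pattern into a regex matched from the start.

    '*' matches any sequence of characters; a trailing '$' anchors the end.
    """
    anchored = path.endswith("$")
    if anchored:
        path = path[:-1]
    body = ".*".join(re.escape(part) for part in path.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_allowed(rules: list, url_path: str) -> bool:
    """Decide one URL path against one group's (directive, path) rules.

    RFC 9309 precedence: the longest matching rule wins; if an Allow and a
    Disallow rule are equally long, Allow wins. No match means allowed.
    """
    best_len = -1
    allowed = True
    for directive, path in rules:
        if not path:  # an empty value (e.g. "Disallow:") matches nothing
            continue
        if rule_to_regex(path).match(url_path):
            is_allow = directive.lower() == "allow"
            if len(path) > best_len or (len(path) == best_len and is_allow):
                best_len = len(path)
                allowed = is_allow
    return allowed


if __name__ == "__main__":
    rules = [
        ("Disallow", "/private-directory/"),
        ("Allow", "/private-directory/public-report.html"),
        ("Disallow", "/*.pdf$"),
    ]
    for path in ("/private-directory/public-report.html",
                 "/private-directory/notes.html",
                 "/downloads/brochure.pdf",
                 "/public-directory/"):
        print(path, "->", "allowed" if is_allowed(rules, path) else "blocked")
```

The Allow rule for the report is longer than the directory-wide Disallow, so the report stays crawlable while the rest of the directory is blocked, regardless of the order in which the rules appear.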

Robots.txt is not a security mechanism. Compliance is voluntary, so malicious bots can simply ignore it, and because the file is publicly readable, listing a sensitive path in it actually advertises that path. Protect sensitive or confidential files with server-side measures such as authentication, which is why SEO and cybersecurity need an integrated approach. To see how Google interprets your directives, use the robots.txt report in Google Search Console, which replaced the older robots.txt Tester, together with the URL Inspection tool for individual URLs. Robots.txt also interacts with on-page signals such as canonical tags and structured data: search engines can only read these signals on pages they are allowed to crawl. Disallowing a duplicate page therefore hides its canonical tag, so instead of combining the two, let duplicates be crawled and use the canonical tag to point search engines at the primary version.
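Crawlers can only read a canonical tag on a page they are allowed to fetch, so a quick audit can catch conflicts between robots.txt and canonical tags. This Python sketch assumes you already have a crawl export mapping each page to the URL in its `rel="canonical"` tag (the `CANONICALS` dictionary, the robots.txt rules, and the example.com URLs are hypothetical). It flags pages whose canonical tag is unreadable because the page is disallowed, and canonical targets that are themselves disallowed. It relies on `urllib.robotparser`, which uses first-match rules without wildcard support, so treat its output as a first pass.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks printer-friendly duplicates.
ROBOTS_TXT = """\
User-agent: *
Disallow: /print/
"""

# Hypothetical crawl export: page URL -> URL in its rel="canonical" tag.
CANONICALS = {
    "https://example.com/print/guide": "https://example.com/guide",
    "https://example.com/guide?ref=newsletter": "https://example.com/guide",
    "https://example.com/old-guide": "https://example.com/print/guide",
}


def audit_canonicals(robots_txt: str, canonicals: dict, agent: str = "Googlebot") -> list:
    """Return messages for canonical signals that robots.txt hides or blocks."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    problems = []
    for page, target in canonicals.items():
        if not parser.can_fetch(agent, page):
            # The crawler never fetches the page, so it never sees the tag.
            problems.append(f"{page}: blocked, so its canonical tag is unreadable")
        if not parser.can_fetch(agent, target):
            # The page points search engines at a URL they may not crawl.
            problems.append(f"{page}: canonical target {target} is blocked")
    return problems


if __name__ == "__main__":
    for problem in audit_canonicals(ROBOTS_TXT, CANONICALS):
        print(problem)
```

With this data the audit reports two problems: the blocked print page, and the old guide whose canonical points at that blocked page.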

Voice search and mobile-first indexing continue to change how content is discovered, and mobile-first indexing in particular has direct consequences for robots.txt. Google primarily crawls with its smartphone crawler, which follows the rules for the Googlebot user agent, so make sure the CSS, JavaScript, and images your pages need to render are not disallowed; blocked resources can prevent Google from seeing a page the way mobile users do. If you serve a separate mobile site on its own host (such as an m. subdomain), remember that each host has its own robots.txt file, and check that it does not block content the desktop site allows. More broadly, as search algorithms get better at understanding intent and context, robots.txt works best alongside other tools such as XML sitemaps, canonical tags, and noindex directives, within a strategy that also covers content, user experience, and marketing insights. A finely tuned robots.txt file improves crawl efficiency by steering search engines toward your most valuable content, which supports your site's relevance and visibility in a competitive market.
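Mobile-first rendering depends on crawlers being able to fetch CSS and JavaScript, so a simple check is to run a page's resource URLs against robots.txt. This Python sketch uses `urllib.robotparser` with the Googlebot token, which Google's smartphone crawler also obeys. The robots.txt rules, the resource URLs, and the `blocked_resources()` helper are hypothetical, and because the parser uses first-match rules without wildcards, results may differ from Google's for complex files.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt with a rule that blocks the site's JavaScript.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /assets/js/
"""

# Hypothetical resources a page needs in order to render on mobile.
RESOURCES = [
    "https://example.com/assets/css/main.css",
    "https://example.com/assets/js/menu.js",
    "https://example.com/images/hero.webp",
]


def blocked_resources(robots_txt: str, resource_urls: list, agent: str = "Googlebot") -> list:
    """Return the rendering resources that `agent` is not allowed to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in resource_urls if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    for url in blocked_resources(ROBOTS_TXT, RESOURCES):
        print("blocked:", url)
```

Here only the menu script is reported, which is exactly the kind of resource that can break mobile rendering if it stays blocked.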

Reassess your robots.txt regularly against crawl statistics, changes in search engine guidelines, and shifts in user search behavior, and adjust it as your site evolves. Consistent maintenance keeps crawlers focused on the content that builds your authority within your niche and supports long-term rankings and sustainable organic reach.
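One way to make regular reviews routine is a regression check that compares the live robots.txt with a proposed edit and reports priority URLs the edit would newly block. In this Python sketch the two file versions, the URL list, and the `load()` and `newly_blocked()` helpers are hypothetical; `urllib.robotparser` evaluates rules first-match without wildcards, so complex files deserve a second check in Google Search Console.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical live file.
OLD_ROBOTS = """\
User-agent: *
Disallow: /checkout/
"""
# Hypothetical proposed edit: the new rule was meant for an archive section,
# but as a prefix it matches every path starting with /products.
NEW_ROBOTS = """\
User-agent: *
Disallow: /checkout/
Disallow: /products
"""

# Hypothetical URLs that must stay crawlable (checkout is expected to be blocked).
PRIORITY_URLS = [
    "https://example.com/products/blue-shoes",
    "https://example.com/blog/",
    "https://example.com/checkout/",
]


def load(robots_txt: str) -> RobotFileParser:
    """Build a parser from robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def newly_blocked(old_txt: str, new_txt: str, urls: list, agent: str = "Googlebot") -> list:
    """Return URLs that the old file allowed but the new file blocks."""
    old, new = load(old_txt), load(new_txt)
    return [u for u in urls if old.can_fetch(agent, u) and not new.can_fetch(agent, u)]


if __name__ == "__main__":
    for url in newly_blocked(OLD_ROBOTS, NEW_ROBOTS, PRIORITY_URLS):
        print("newly blocked:", url)
```

The check reports the product page before the change goes live, while the checkout URL, already blocked in both versions, is not flagged.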

Frequently Asked Questions

Quick answers about Robots.txt Optimization.

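What is robots.txt optimization?

Robots.txt optimization is the practice of configuring your robots.txt file so search engine crawlers spend their time on the pages that matter and skip low-value or private areas of the site.

Why does it matter?

It helps search engines discover and refresh your important content efficiently, which supports organic traffic, rankings, and long-term digital authority.

How do I get started?

Audit which sections should and should not be crawled, write clear User-agent, Allow, and Disallow rules, check them in Google Search Console, and revisit the file whenever your site structure or analytics data call for changes.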